AI agents are becoming “intentional” from a legal perspective. Here's what our research found

At Norm Ai, we are planning for much of the economy to be run by AI agents. In fact, we’re building the legal infrastructure to enable that.

Current law provides no clarity on how AI agents can comply with legal requirements in many consequential areas.

Take intentionality, for example. Across contract law, criminal law, and tort law, intent does not require an analysis of inner mental states. It is a normative tool used to gate legal effect, allocate blame, and manage risk. If AI agents exhibit certain characteristics of intentionality, the law can in many cases treat artificial intentionality as legally consequential without granting AI systems personhood, consciousness, or moral standing.

As AI models advance, they are generating more of the behavioral signals that law already uses to infer intent. So we've been developing intentionality scores to track how many of those signals AI agents now produce, and we’ve been conducting legal research on where these signals allow AI agents to enter legal frameworks originally designed for humans. I will present this work at Stanford Law School's FutureLaw conference next week as part of my presentation on Legal AGI.

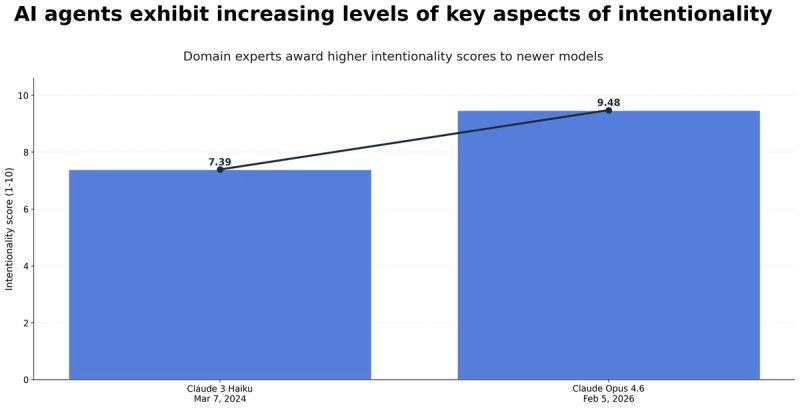

Together with Daniel Gervais (Professor at Vanderbilt University Law School), we studied how AI agents can increasingly generate conduct that appears intentional. AI engineers at Norm Ai and Norm Law found that Anthropic’s models rose from an average intentionality score of 7.39 for Claude 3 Haiku (March 2024) to 9.48 for Claude Opus 4.6 (February 2026). That score is a functional measure, provided by domain experts, of legally relevant behavior: autonomy, goal persistence, reliance or assent signaling, and the extent to which the system's conduct departs from the initial prompt while still pursuing an intelligible objective.

The chart above shows that newer models increasingly generate the signals legal institutions already use to infer purposeful conduct.

Law treats intent functionally, not metaphysically. Criminal law, contract law, and tort law already infer intent from outward behavior, persistence, adaptation, and induced reliance, and they do not require proof of a literal mind. Our work suggests that AI agents are producing more of those signals over time. Depending on how AI agents are deployed, their conduct can therefore increasingly be treated as legally consequential within these key frameworks, which require intent on the part of the actors involved.